Tinder's AI Camera Roll Scan: A Retention Gamble Wrapped in Privacy Risks

🕐 Last updated: March 31, 2026

- Tinder revenue fell 6% year-over-year in Q4 2024, with engagement metrics showing particular weakness

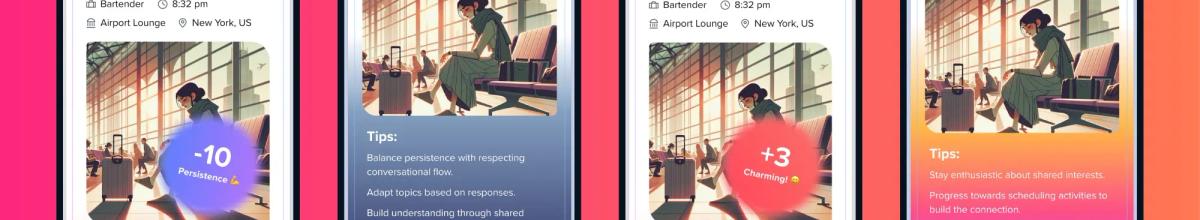

- The app now offers AI-powered camera roll scanning to infer personality traits and curate daily matches

- Match Group has 75 million monthly active users on Tinder, making this pivot far riskier than Hinge's 2016 shift to curated feeds

- Pew research from 2023 found 45% of U.S. dating app users feel 'overwhelmed' by choice

Match Group has just handed Tinder users a choice that neatly encapsulates the current state of the dating industry: grant the app permission to scan your entire camera roll so an AI can infer your personality traits and serve you a curated handful of matches each day, or stick with the infinite scroll that's been slowly suffocating user engagement for years. Neither option sounds particularly appealing, which is rather the point.

The features, unveiled this week, mark Tinder's most aggressive pivot yet away from the swipe-for-volume model that defined the category. According to the company, the new AI tools analyse photo metadata, location data, and visual patterns to build personality profiles, then use those inferences to surface what Tinder describes as 'more meaningful matches' in a limited daily selection. The camera roll analysis happens on-device, the company insists, with no images uploaded to servers.

Strategic desperation meets algorithmic ambition

This is Tinder attempting to solve a problem it created—swipe fatigue—by introducing a different problem that may prove even harder to explain to a userbase that's spent a decade learning to distrust algorithmic curation.

The strategic logic is sound: engagement is slipping, rivals have already moved to curated feeds, and Match Group (MTCH) needs product differentiation that doesn't require rebuilding the entire platform. But asking users to hand over their camera rolls so an AI can psychoanalyse their holiday snaps is a hell of a trade-off, and one that assumes a level of institutional trust Tinder hasn't earned in years.

AI curation as last resort

Tinder's shift mirrors moves already made by Hinge, which abandoned infinite swiping in favour of a curated feed in 2016, and more recently by apps like The League and Thursday, which explicitly limit daily matches to combat decision paralysis. The difference is scale. Hinge made the pivot when it was a struggling also-ran desperate for differentiation. Tinder is attempting the same manoeuvre with 75 million monthly active users and a brand synonymous with casual abundance.

The timing is commercially transparent. MTCH disclosed in its Q4 2024 earnings that Tinder revenue fell 6% year-over-year, with particular softness in user engagement metrics. Average revenue per paying user ticked up slightly, but payer conversion stalled. The company has been explicit in investor calls that AI-driven personalisation is central to its turnaround strategy, not just for Tinder but across the portfolio.

What's less clear is whether the premise holds. Dating app fatigue is real—research from Pew in 2023 found that 45% of U.S. users reported feeling 'overwhelmed' by choice, and qualitative studies have documented decision paralysis and declining match quality as common complaints. But Hinge's curated feed hasn't delivered the hockey-stick growth story MTCH hoped for when it acquired the app, and anecdotal reports from users suggest frustration with algorithmic gatekeeping has simply replaced frustration with infinite scrolling.

The trust deficit problem

The camera roll component is where the strategy gets genuinely fraught. Tinder claims the analysis is on-device and ephemeral, with no images leaving the phone. That's the same architecture Apple uses for Photos search, and it's technically defensible. But it requires users to trust that implementation, and trust is not a resource Tinder can draw on freely.

The app has faced years of criticism over data practices, from revelations that it sold health data to advertisers to ongoing complaints about opaque algorithmic ranking that privileges paying users. Asking that same userbase to believe that their camera rolls—often containing years of intimate, unfiltered personal history—are safe because Tinder pinky-swears the processing happens locally is a big ask.

Regulatory landmines ahead

The face recognition element introduces a separate layer of risk under both UK GDPR and the EU AI Act, which classifies biometric identification as high-risk and imposes strict transparency and consent requirements. Tinder's opt-in framework likely satisfies baseline consent thresholds, but the company will need to demonstrate that users genuinely understand what they're consenting to, not just clicking through a permission prompt. The Information Commissioner's Office has been increasingly assertive on dark patterns and consent theatre, and dating apps are squarely in its sights following enforcement actions against other platforms for precisely this kind of feature creep.

When your product pitch sounds like a Black Mirror synopsis, you've likely got a messaging problem.

There's also the reputational calculation. Privacy concerns aside, the optics of a dating app asking to scan your camera roll are, to put it mildly, not great. Social media response has ranged from sceptical to openly hostile, with many users pointing out the inherent creepiness of an app analysing photos never intended for public consumption to build psychological profiles.

Match Group clearly believes the user acquisition and retention benefits outweigh the privacy blowback, or it wouldn't have shipped the feature. The calculation is that enough users are sufficiently exhausted by infinite swiping that they'll trade privacy for convenience, and that the resulting engagement lift will justify the controversy. That may even be correct. But it's a narrow path, and one that assumes Tinder's AI is actually good enough to deliver matches that feel meaningfully better than the status quo.

What operators should watch

For the broader industry, this is a test case for how far users will let dating apps into their digital lives in exchange for promises of better outcomes. If Tinder's AI tools drive measurable engagement gains without triggering regulatory action or a user revolt, expect rapid copycatting across the market. If they don't—if opt-in rates are anaemic, or if privacy regulators decide the consent model doesn't pass muster—the industry will have learned an expensive lesson about the limits of AI-driven personalisation when trust is already depleted.

Other operators should be watching three things: opt-in rates, which Tinder has not disclosed but which will tell the real story about user appetite for this level of data access; engagement metrics for users who do opt in, particularly whether the curated matches actually reduce churn; and regulatory response, especially from the ICO and EU authorities who've signalled that biometric processing in consumer apps will face heightened scrutiny under the AI Act. The gap between what's technically legal and what's reputationally survivable has never been wider.

- This feature represents a critical test of whether users will trade significant privacy concessions for better match quality—the answer will shape the entire dating app industry's AI strategy

- Watch for opt-in rates and regulatory response from the ICO and EU authorities, particularly around biometric processing under the AI Act

- The success or failure of Tinder's pivot will determine whether AI-driven curation becomes industry standard or serves as a cautionary tale about the limits of algorithmic personalisation when institutional trust is already depleted
