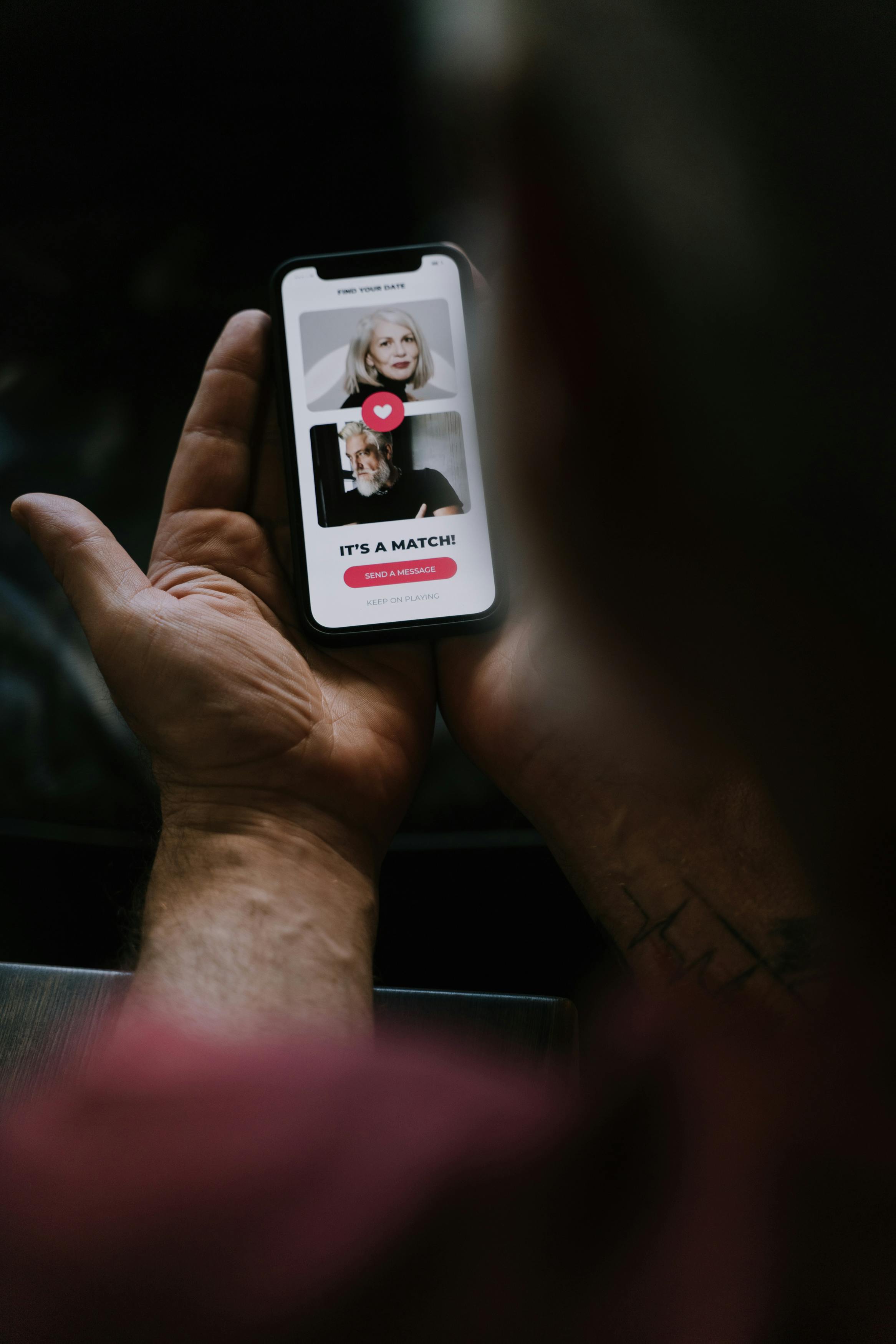

OkCupid's Age Preference Data: The Algorithmic Dilemma Platforms Can't Ignore

- Men's stated age preferences on OkCupid consistently skew towards younger women regardless of the men's own age, whilst women's preferences track their own age throughout their lifespans

- The asymmetry creates a structural mismatch that narrows the viable matching pool, particularly for women over 35

- Major platforms already throttle some user preferences (most removed or restricted ethnicity filters in 2020), but age has been treated as sacrosanct

- The gap between stated preferences and actual messaging behaviour suggests users are more flexible in practice than their filter settings indicate

Academic research into OkCupid preference data has surfaced a stubborn asymmetry in how men and women filter potential partners by age—and it's the kind of finding that should make product teams at every major platform deeply uncomfortable. According to analysis by mathematician Hannah Fry, men's stated age preferences remain persistently skewed towards younger women regardless of how old the men themselves become, whilst women's preferences track their own age throughout their lifespans. For anyone designing matching algorithms, this creates an immediate problem: do you optimise for what users say they want, or engineer towards outcomes that might actually serve them better?

The pattern Fry identified isn't subtle. Men across age cohorts consistently mark younger women as desirable partners, creating a structural mismatch with women's preferences that narrows the viable matching pool, particularly for women over 35. The implications for conversion rates, time-to-match and, ultimately, retention follow directly: a smaller pool of mutually acceptable profiles plausibly means fewer matches, longer waits, and more churn among the users affected.

This is the algorithmic choice that defines whether dating platforms are neutral marketplaces or opinionated products. Every major operator already throttles some user preferences: you can't filter by race on most apps, and height filters remain contentious. Age is the one preference the industry has treated as sacrosanct, but the data suggests that deference may be producing worse outcomes for a significant portion of the user base whilst reflecting patterns that have nothing to do with actual compatibility.

The question isn't whether platforms should intervene. They already do. It's whether they'll acknowledge it.

Stated preferences versus revealed behaviour

The research draws from preference settings users explicitly configure, which introduces a methodological wrinkle that anyone who's reviewed match-to-message conversion data will recognise immediately. What people say they want and what they respond to in practice frequently diverge. Multiple studies tracking actual platform behaviour—who users message, who they reply to, who they ultimately meet—have shown that stated preferences tend to be more restrictive than actual behaviour.
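One way to make the stated-versus-revealed gap concrete is to count how often a user messages people outside the age range they configured. The sketch below is illustrative only: the `StatedRange` type, the `out_of_range_share` function, and the sample ages are all hypothetical, and nothing here reflects how any platform or the research itself measures the gap.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StatedRange:
    """Age bounds a user has explicitly configured (hypothetical structure)."""
    min_age: int
    max_age: int


def out_of_range_share(stated: StatedRange, messaged_ages: list[int]) -> float:
    """Fraction of messaged recipients whose age falls outside the stated range."""
    if not messaged_ages:
        return 0.0
    outside = sum(
        1 for age in messaged_ages
        if not (stated.min_age <= age <= stated.max_age)
    )
    return outside / len(messaged_ages)


# Invented example data, not drawn from any real dataset.
stated = StatedRange(min_age=25, max_age=32)
messaged = [27, 30, 34, 36, 29, 33, 31, 38]
share = out_of_range_share(stated, messaged)
print(f"Share of messages sent outside the stated range: {share:.2f}")
```

A share well above zero would indicate a user whose behaviour is looser than their filter settings, which is the kind of signal the studies above describe.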

This gap matters for product design. If the asymmetry Fry identified exists primarily in preference declarations but not in messaging patterns, the algorithm should arguably discount those stated bounds. Platforms already do this selectively. Hinge's 'Most Compatible' feature routinely serves profiles outside user-defined parameters when internal data suggests compatibility markers align. Bumble's algorithm weights behaviour over declarations.

The distinction between respecting user agency and optimising for engagement has always been porous. What makes age different is the sheer scale of the gender divergence and the fact that it maps onto well-documented societal patterns around fertility anxiety, gendered ageing, and status asymmetries.

Platforms can't address the root causes, but they absolutely shape whether those patterns get amplified or attenuated in the matching environment.

The opacity problem

None of the major platforms publicly disclose how they weight age preferences against other matching signals, which makes it impossible to assess whether current algorithmic approaches are already compensating for the asymmetry Fry describes or reinforcing it. Match Group (MTCH) properties have historically leant towards strict preference adherence: if you set an age range, you see profiles within it. Bumble (BMBL) has signalled more willingness to override user settings when engagement data suggests a strong match. Grindr (GRND) doesn't face the same gendered age dynamics but navigates analogous questions around stated versus revealed preferences in other filtering categories.

The lack of transparency isn't accidental. Algorithmic inscrutability is a competitive moat and a shield against user backlash.

If Hinge disclosed that it down-ranks profiles from users whose age falls outside your stated range but shows them anyway when compatibility scores are high, some segment of the user base would object. But that objection might reflect precisely the kind of self-limiting preference the algorithm is designed to overcome.

Trust and safety teams, meanwhile, occupy an awkward position. Age verification has become a regulatory requirement across multiple jurisdictions, driven by child safety concerns under frameworks like the UK Online Safety Act (OSA). But verification focuses on minimum thresholds, not on how platforms handle age as a matching input once users are confirmed adults. There's no regulatory pressure to address age-based matching asymmetries, even when those asymmetries produce measurably worse outcomes for specific demographics.

The precedent of other restricted filters

Dating platforms have already established that some user preferences won't be accommodated, regardless of demand. Ethnicity filters were removed or restricted by most major apps in 2020 following sustained criticism that they facilitated racial discrimination. The industry consensus became that enabling those filters—even if users wanted them—was incompatible with platform values and exposed operators to reputational and ethical risk.

Height filters remain available on most platforms despite parallel arguments that they encode discriminatory preferences. The difference appears to be that height filtering doesn't map onto a protected characteristic in the same way race does, and the gender dynamics are more evenly distributed—both men and women filter by height, even if the thresholds differ.

Age sits somewhere between these cases. It's not a protected characteristic in the dating context for adults. It correlates with fertility, which gives it a biological dimension other preference categories lack. But the asymmetry is so pronounced, and the effect on match availability for older women so measurable, that treating it as a neutral user input starts to look like a choice rather than a necessity.

What intervention could look like

Product teams have several levers available that fall well short of removing age preferences entirely. Platforms could apply a discount factor to age-based filtering, similar to how some apps already handle distance preferences, showing a small percentage of slightly-out-of-range profiles when other signals are strong. They could introduce friction, requiring users to articulate why age matters to them or surfacing data on how expanding age ranges affects match rates. They could shift from hard filters to weighted preferences, where age becomes one input among many rather than a binary gate.

The challenge is that any intervention requires platforms to take a position on whether the stated preference pattern is a problem that needs solving.
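The difference between a hard age filter and a weighted preference can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any platform's actual ranking logic: the exponential decay, the `tolerance_years` parameter, the single `compatibility` score, and the sample candidates are all hypothetical.

```python
import math
from typing import Callable

Candidate = tuple[str, int, float]  # (name, age, compatibility score in [0, 1])


def hard_gate(age: int, min_age: int, max_age: int) -> float:
    """Binary filter: 1.0 inside the stated range, 0.0 outside it."""
    return 1.0 if min_age <= age <= max_age else 0.0


def soft_age_weight(age: int, min_age: int, max_age: int,
                    tolerance_years: float = 3.0) -> float:
    """1.0 inside the stated range, decaying exponentially with distance outside it.

    tolerance_years is a hypothetical tuning knob: larger values discount
    the stated bounds more heavily.
    """
    if min_age <= age <= max_age:
        return 1.0
    distance = (min_age - age) if age < min_age else (age - max_age)
    return math.exp(-distance / tolerance_years)


def rank(candidates: list[Candidate], min_age: int, max_age: int,
         weight_fn: Callable[[int, int, int], float]) -> list[str]:
    """Order candidates by compatibility multiplied by the age weight."""
    scored = [(name, compat * weight_fn(age, min_age, max_age))
              for name, age, compat in candidates]
    kept = [(name, score) for name, score in scored if score > 0]
    return [name for name, _ in sorted(kept, key=lambda p: p[1], reverse=True)]


# Invented candidates for a user whose stated range is 25 to 32.
candidates = [("A", 30, 0.40), ("B", 34, 0.90), ("C", 45, 0.90)]
hard_ranking = rank(candidates, 25, 32, hard_gate)
soft_ranking = rank(candidates, 25, 32, soft_age_weight)
print(hard_ranking, soft_ranking)
```

Under the hard gate only the in-range candidate is shown. Under the soft weight, the highly compatible candidate two years outside the range rises to the top, while the equally compatible candidate thirteen years outside it is discounted to the bottom. How steep that decay should be is exactly the kind of position a platform would have to take.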

If the asymmetry simply reflects what men and women want, and if catering to stated preferences maximises short-term engagement, then algorithmic neutrality remains the path of least resistance. If, however, the pattern produces structural disadvantages for a large user segment whilst reflecting biases that don't predict actual compatibility, then continuing to build the product around those stated preferences starts to look like product malpractice.

Operators will face this question with increasing frequency as algorithmic accountability becomes a regulatory and reputational expectation. The EU Digital Services Act (DSA) mandates transparency around recommender systems for very large platforms, and the Online Safety Act introduces duty-of-care obligations that could plausibly extend to how matching algorithms affect user wellbeing.

The industry's instinct has been to treat algorithms as neutral systems that reflect user input. That framing becomes harder to sustain when the data shows systematic patterns that disadvantage specific groups, and when platforms have already demonstrated willingness to override user preferences in other contexts. The Fry analysis won't force immediate product changes, but it sharpens a question the industry has been avoiding: whether dating platforms are markets that should efficiently clear supply and demand as stated, or designed experiences that should optimise for outcomes beyond immediate user preference satisfaction.

Match availability for women over 35, long-term relationship formation, and equitable access to the matching pool are all measurable outcomes. Whether they're outcomes the industry chooses to optimise for remains an open question.

- The core question facing dating platforms is whether they function as neutral marketplaces or designed experiences; transparency and duty-of-care requirements under the DSA and OSA are likely to push operators to articulate this position publicly

- Watch for product changes that introduce friction or weighting to age preferences rather than hard filters, particularly from platforms like Bumble that have already signalled willingness to override stated preferences

- The precedent set by age preference handling will likely inform how platforms approach other systematic matching asymmetries as algorithmic accountability becomes a competitive and regulatory expectation
