UK daters lost £106 million to romance scams in 2024/25, but the true figure may exceed £170 million. As deepfake technology and automated messaging enable industrial-scale fraud, dating platforms remain largely unaccountable — until now.

Executive Summary

Dating Industry Insights has compiled the most comprehensive dataset on romance fraud and dating app safety in the UK. The evidence reveals systemic failure by platforms, accelerating financial losses, and a regulatory inflection point that could force meaningful change.

Key Findings

- £106 million lost in 9,449 confirmed cases across 2024/25 in the UK, with Financial Conduct Authority estimates suggesting actual losses exceed £170 million annually due to underreporting

- Average victim loses £7,000; victims aged over 61 lose an average of £19,000, often drawing on pensions and lifetime savings before discovering the deception

- AI deepfakes and automated messaging are industrialising fraud: McAfee 2026 research documents single operators running dozens of false identities simultaneously; RedCompass Labs confirms Southeast Asian organised crime networks with standardised victim extraction protocols

- 34% of UK online daters report being targeted, and 64% of those targeted fall victim to some form of financial deception (Norton survey); 61% of UK dating app users have matched with suspected bot or scammer accounts (GBG research)

- Dating platforms aware but unresponsive: Match Group's Sentinel database processes hundreds of incidents weekly yet has never published comprehensive transparency data; company disbanded central trust-and-safety team in 2024 amid regulatory pressure

- Sexual predation and child exploitation are systematic: 175% rise in dating app-linked predatory offences 2017–2021; two-thirds of investigated child sex offenders used dating platforms; 14% of reported sexual assaults in one study occurred at a first in-person meeting arranged through a dating app

The Scale of Financial Fraud

Romance fraud has transcended the realm of petty crime. It is now an industrial enterprise, generating losses that rival serious organised crime operations, yet receiving a fraction of the investigative and enforcement resources directed at other fraud categories.

The financial toll is well documented. The National Fraud Intelligence Bureau (NFIB), which collates crime data from Action Fraud and financial institutions across the UK, recorded £106 million lost across 9,449 reported cases in 2024/25. The figure covers only cases in which victims reported the fraud to police or Action Fraud.

Yet reported losses are substantially lower than actual losses. The Financial Conduct Authority estimates that only 60% of romance fraud victims report their losses to authorities — a figure itself derived from comparison of insurance claims, bank reports and victim surveys, and therefore subject to methodological uncertainty. Extrapolating from this estimated reporting rate, the true annual toll of UK romance fraud may exceed £170 million, though the precision of this figure should be treated with caution given that it compounds two layers of estimation.
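The extrapolation described above is a single division, sketched here in Python. The function name is illustrative, and the calculation assumes that unreported losses are, on average, the same size as reported ones, which the sources do not establish.

```python
def estimate_true_losses(reported_losses: float, reporting_rate: float) -> float:
    """Scale reported losses up by the share of victims who report."""
    if not 0 < reporting_rate <= 1:
        raise ValueError("reporting_rate must be in (0, 1]")
    return reported_losses / reporting_rate


reported = 106_000_000  # NFIB reported losses, 2024/25, in GBP
estimate = estimate_true_losses(reported, 0.60)  # FCA's estimated reporting rate
print(f"Estimated true losses: £{estimate / 1e6:.1f} million")  # £176.7 million
```

A small change in the assumed reporting rate moves the result considerably: at 50% the same calculation gives £212 million, which is one reason the headline estimate should be read as a rough bound rather than a measurement.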

The human cost becomes clearer in the victim profile. NFIB data puts the average romance fraud loss at £7,000, rising to £19,000 for victims aged over 61. (Dividing the £106 million total by 9,449 cases gives a mean of roughly £11,200, so the £7,000 figure presumably reflects a median or a different measure; the source does not specify which.) These are not arbitrary figures. They represent pension withdrawals, ISA liquidations, equity release on property, and inheritance handed to "partners" who never existed. The emotional cost of shame, isolation and reputational damage compounds the financial devastation.

The trend is accelerating. Barclays reported a 20% surge in romance scam cases in early 2025 alone, suggesting the annual total will exceed 2024/25 levels. UK Finance, which tracks consumer fraud losses via the financial system, documented £20.5 million lost across approximately 3,000 cases in the first half of 2025, described as equivalent to 40% of the previous year's reported losses. That comparison appears to use UK Finance's own prior-year total rather than the NFIB figure, since £20.5 million is only about 19% of £106 million.

Barclays' analysis of its customer base found that 67% of all reported romance scams originate on dating and social media platforms, the digital infrastructure these companies have built and continue to operate. The platforms are not merely passive vessels containing fraud; they are the primary vector through which romance scammers identify, cultivate and exploit victims.

How Scammers Operate: The Extended Extraction Protocol

Romance scammers do not operate according to the improvised, chaotic model of casual criminals. Instead, they follow a structured, deliberately extended exploitation pathway designed to maximise victim extraction while minimising the risk of early detection.

TSB research examining fraud patterns within its customer base found that victims are systematically drained through repeated payments over an extended period. On average, victims make 11 separate payments across 95 days before discovering the fraud. This is not accidental. The extended timeline serves three strategic objectives: it builds emotional investment through sustained contact and intimacy, it exploits the victim's growing trust in the fraudster, and it maximises total financial extraction before the victim's scepticism reaches a critical threshold.

The operational methodology follows a disturbingly consistent script across different scammer networks. Initial contact occurs through a dating app or social media platform. The scammer establishes a false romantic identity, typically using photographs obtained from legitimate sources or, increasingly, generated through AI synthesis. Early communication focuses on emotional bonding: intimate conversations, expression of romantic interest, cultivation of a sense of exclusive connection.

This phase typically lasts 2–4 weeks. Once emotional investment is established, the scammer introduces a manufactured crisis requiring immediate financial assistance. The crisis narrative is carefully crafted to trigger emotional activation whilst creating perceived urgency: a dying relative requiring medical treatment, a business emergency requiring capital injection, a travel emergency requiring immediate funds, a medical procedure requiring pre-payment.

The victim, now emotionally invested and primed to "help" their putative romantic partner, complies. A payment is made — typically £500–£2,000 to appear plausible yet substantial. The scammer acknowledges the assistance, expresses profound gratitude, and promises repayment. The crisis is then resolved, reinforcing trust.

Within days or weeks, another crisis emerges. The pattern repeats, with payment amounts rising incrementally as the victim's emotional investment grows and their suspicion recedes. The deception typically unravels only after the 11 or so payments TSB documents, through a bank intervention, a family member's intervention, or the exhaustion of the victim's available funds.
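The repeated, escalating payment pattern described above is the kind of signal a bank-side check could in principle look for. The following Python sketch is purely illustrative: the function name and thresholds are hypothetical and are not drawn from TSB or any other institution's actual controls.

```python
from datetime import date


def looks_like_staged_extraction(payments, min_count=4, max_gap_days=30):
    """Flag a payee whose payments repeat at short intervals and trend upward.

    payments: list of (date, amount) tuples sent to a single payee.
    min_count and max_gap_days are hypothetical thresholds for illustration.
    """
    if len(payments) < min_count:
        return False
    ordered = sorted(payments)
    dates = [d for d, _ in ordered]
    amounts = [a for _, a in ordered]
    # Every consecutive pair of payments must fall within the allowed gap.
    gaps_ok = all((b - a).days <= max_gap_days for a, b in zip(dates, dates[1:]))
    # At least half of the consecutive steps must be increases in amount.
    rises = sum(later > earlier for earlier, later in zip(amounts, amounts[1:]))
    mostly_rising = rises >= (len(amounts) - 1) / 2
    return gaps_ok and mostly_rising


history = [
    (date(2025, 1, 6), 500),
    (date(2025, 1, 20), 800),
    (date(2025, 2, 3), 1500),
    (date(2025, 2, 24), 2000),
]
print(looks_like_staged_extraction(history))  # True
```

A rule this simple would also flag legitimate behaviour, such as rising instalments to a builder, so in practice it could only serve as one input among several rather than as grounds for blocking a payment.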

This extended extraction methodology is no accident; it is industrial practice. The consistency of victim experience across different platforms and geographic regions suggests either a formalised training process or a deliberate playbook deployed across organised fraud networks.

The platforms where these crimes unfold publicly claim that they lack the technological or operational capacity to intervene at scale. This claim warrants scrutiny. Match Group, which operates Tinder, Hinge, OkCupid, Match, PlentyOfFish and numerous other platforms, has developed a centralised database system called Sentinel. This system processes incident reports, cross-references accounts across the Match Group ecosystem, identifies patterns of abuse and fraud, and flags accounts for action.

According to Match Group's own disclosures to investors, the Sentinel database processes hundreds of incidents per week. Yet the company has never published comprehensive transparency data revealing the scope of detected fraud, the distribution of incidents across platforms, the actions taken against perpetrators, or the efficacy of detection. The data exists. The company has not published it, and has not publicly explained the omission.

AI-Powered Fraud: The Industrialisation of Deception

A qualitative transformation in romance fraud capability is now underway, driven by the maturation of artificial intelligence technologies — specifically deepfake generation, synthetic media creation, and large language model-based automated messaging systems.

McAfee's 2026 research documents this technological inflection. Deepfake technology — AI systems trained to generate photorealistic facial composites indistinguishable from authentic photographs — is now being weaponised within romance fraud networks. A scammer no longer requires a photographic archive stolen from legitimate sources or the services of a cooperative accomplice willing to loan their image. Instead, a scammer can generate synthetic profile images that depict individuals who have never existed, yet appear entirely authentic to human observation.

Concurrently, large language models enable automated message generation at scale. A scammer can input a victim's profile, relationship history and prior messages, and the AI system generates contextually appropriate responses that maintain conversation continuity and emotional engagement. The scammer no longer needs to manually type messages to dozens of simultaneous victims. Instead, a single fraudster can now operate dozens of false identities in parallel, each running the same exploitation playbook simultaneously.

McAfee's research documents cases where a single scammer operated 40–50 active false identities across multiple platforms, each at a different stage of the victim extraction pipeline. The victim's impression of a unique romantic connection is actually algorithmic — the product of language model generation, deepfake imagery and automated victim management systems.

RedCompass Labs, a Singapore-based digital forensics firm specialising in fraud intelligence, has documented the geographic nexus of industrial romance fraud. Organised crime networks in Southeast Asia — particularly in Cambodia and the Philippines — have established what can only be described as romance fraud factories. These operations employ dozens of workers, many of them coerced or trafficked, operating in shifts to manage victim databases, generate communications and extract payments.

RedCompass Labs' investigation revealed that these networks operate according to standardised protocols: shared victim databases, templated extraction scripts translated into multiple languages, division of labour between relationship-builders and payment-extractors, and systematic laundering of proceeds through cryptocurrency and remittance services. These are not opportunistic criminals; they are organised crime enterprises with hierarchical management, operational discipline and industrial efficiency.

The combination of deepfake technology, automated messaging and organised crime infrastructure represents a fundamental escalation in romance fraud capability. The barriers to entry have collapsed. A scammer no longer requires sophisticated technical skills, access to stolen identity materials, or even language facility. The infrastructure can be purchased, leased or accessed as a service through dark web marketplaces. The industrialisation of romance fraud is well underway.

Consumer Vulnerability at Scale

The vulnerability of UK dating app users to romance fraud is not theoretical speculation or isolated anecdote. Multiple large-scale surveys and research studies document the prevalence and impact of fraud targeting online daters.

Norton conducted security research surveying 14,000 adults across 14 countries, including more than 1,500 UK respondents questioned about dating app usage. The research found that 34% of UK online daters report being targeted by romance scams. This does not mean suspected or possible targeting. These are individuals who encountered an account, engaged in conversation, and subsequently received explicit romantic solicitation combined with financial requests, meeting the definitional threshold for romance scam targeting.

Of those 34% targeted, 64% fell victim to at least some form of financial deception, whether monetary loss, credential theft or other economic harm. Extrapolating across the UK dating app population, estimated at approximately 14 million adults, this suggests that roughly 4.8 million UK daters have been targeted (34% of 14 million) and approximately 3 million have experienced financial harm (64% of those targeted). These figures are indicative rather than precise: survey-based extrapolation carries a significant margin of error, and the definition of "financial harm" spans a broad spectrum from credential exposure to direct monetary loss. The directional signal, however, is clear: the scale of harm is substantially larger than official case counts suggest.
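The chained percentages can be checked directly. This Python sketch applies the two Norton survey rates to the 14 million population estimate; the function and variable names are illustrative, and the result inherits all the uncertainty of the survey inputs.

```python
def extrapolate(population: int, targeted_rate: float, victim_rate: float):
    """Apply a targeting rate, then a victimisation rate among those targeted."""
    targeted = population * targeted_rate
    harmed = targeted * victim_rate
    return targeted, harmed


targeted, harmed = extrapolate(14_000_000, 0.34, 0.64)
print(f"Targeted: {targeted / 1e6:.2f} million")  # 4.76 million
print(f"Harmed:   {harmed / 1e6:.2f} million")    # 3.05 million
```

Because the second rate applies only to the targeted group, the share of all online daters experiencing financial harm is the product of the two rates, about 22%.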

The platforms themselves possess data documenting the prevalence of fraudulent accounts within their ecosystems, yet rarely disclose this information publicly. GBG, an identity verification and fraud prevention company, analysed dating app user bases and user-to-user matching patterns. GBG's research found that 61% of UK dating app users matched with accounts displaying characteristics consistent with bot networks, scammer profiles or catfishing operations. This is not marginal; this is a majority of active users encountering fraudulent or deceptive accounts.

The prevalence of fraudulent accounts on dating platforms is not accidental. It reflects incentive structures that reward user acquisition and engagement metrics over account authenticity verification. A dating app generates revenue through user subscriptions, in-app purchases and data monetisation, and every account, regardless of authenticity, counts toward the user metrics that influence platform valuation, advertiser interest and investor confidence. Critics argue that this leaves platforms with limited financial incentive to remove fraudulent accounts. Platform operators counter that fraudulent accounts damage user trust and long-term retention, creating an indirect incentive for removal. The evidence suggests the balance has historically favoured growth metrics over verification rigour.

Sexual Predation and Violent Crime

Romance fraud is not merely a financial crime. The infrastructure that enables scammers simultaneously enables predatory behaviour, sexual assault and child sexual exploitation and abuse (CSEA).

Brigham Young University conducted research on sexual assault cases reported to law enforcement, comparing the circumstances of each assault with how the victim and perpetrator first made contact. The research analysed 2,000 sexual assault cases and found that 14% of reported sexual assaults occurred at the first in-person meeting following contact on dating apps. This proportion is statistically elevated relative to baseline acquaintance assault rates. Moreover, BYU's analysis found that assaults initiated through dating app contact displayed higher severity indicators: more frequent strangulation, higher rates of weapon use, and greater victim injury relative to other acquaintance assaults.

This is not coincidence. Dating platforms create structural vulnerability: victims meet perpetrators in person without mutual social verification, without shared social networks that might provide protective oversight, and without institutional memory of perpetrator behaviour. Predators systematically exploit this structural vulnerability. They create false profiles, cultivate apparent romantic interest, engineer in-person meetings, and perpetrate assault against individuals who have deliberately placed themselves in isolated circumstances.

The crime statistics document this pattern. Between 2017 and 2021, the recorded incidence of predatory offences linked to dating apps rose 175 per cent, from 699 to 1,922 recorded offences. This growth outpaced dating app usage growth during the same period, suggesting that dating platforms are concentrating predatory harm rather than merely hosting it.
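The 175 per cent figure follows from the two recorded counts. A minimal Python check, with an illustrative function name:

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


rise = percent_change(699, 1922)
print(f"{rise:.0f}%")  # 175%
```

A 175 per cent rise means the 2021 count was about 2.75 times the 2017 count, not 1.75 times.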

The pattern extends to more serious harms. The National Crime Agency, which investigates serious and organised crime, has documented a 600% increase in serious sexual assaults facilitated through online dating platforms between 2009 and 2014. This trend continues. Dating platforms have become the primary infrastructure through which predators identify, contact and meet potential victims.

Child Sexual Exploitation and Abuse

Dating platforms ostensibly operate age verification systems. They publish "safety" pledges. Yet evidence from child safeguarding research documents that dating platforms are systematically exploited by child sexual offenders.

Childlight, a child safeguarding research organisation, conducted an investigation into the digital behaviour of identified child sex offenders. The research examined offenders convicted or identified through CSEA investigations and documented their digital activity on dating and social platforms. The findings are alarming: two-thirds of investigated child sex offenders had used dating platforms to contact children.

Childlight's analysis further found that approximately 1 in 5 investigated offenders used dating platforms daily or near-daily to identify and contact potential victims. These are not opportunistic encounters. These are systematic, deliberate patterns of predatory targeting.

University of Edinburgh research on child sex offender behaviour found that individuals convicted of CSEA offences were nearly 4 times more likely to have used online dating platforms as a contact method compared to offenders who used other digital channels. Dating platforms, with their large user bases, profile-matching functionality, private messaging infrastructure and minimal age verification, are the preferred hunting ground for child sexual predators.

The aggregate effect is clear. Between 2009 and 2024, dating platforms evolved from emerging services to ubiquitous infrastructure for adult connection. Over the same period, child sexual exploitation offences facilitated through dating platforms have grown sharply. The infrastructure that platforms have built is being weaponised against children at industrial scale.

Platform Response: Awareness Without Accountability

The dating platform industry is aware of the scope of romance fraud, sexual predation and child exploitation occurring on their services. Multiple platforms have acknowledged the problems internally, through investor disclosures, or in response to regulatory inquiry.

Match Group, which operates the largest portfolio of dating apps globally (Tinder, Hinge, OkCupid, Match, PlentyOfFish and others), implemented a centralised safety database called Sentinel. According to Match Group's own disclosures to investors and regulators, Sentinel processes hundreds of incidents per week across the Match Group ecosystem. The database identifies suspected fraudsters, abusers and predatory accounts; flags them for action; and shares information across platforms to prevent repeat offences.

Yet Match Group has never published detailed transparency reporting on Sentinel's operation. The public has no data on:

- The total number of incidents processed annually

- The geographic distribution of detected fraud, abuse and exploitation

- The actions taken against identified perpetrators (account suspension, law enforcement reporting, content removal)

- The success rate in preventing repeat offences

- The profile of victims identified and the harms prevented

This silence is particularly notable given that Match Group's 2024 revenue reached $3.19 billion, with Tinder — a single platform — generating estimated annual revenues exceeding $1.5 billion. The company has financial resources to conduct and publish rigorous transparency reporting. It has not done so.

In 2024, Match Group made a strategic decision that sheds light on its priorities. The company disbanded its central trust-and-safety team, externalising these functions to overseas contractors. Outsourcing specialist functions is common practice across the technology sector and does not inherently indicate reduced commitment. However, the timing is notable: the decision coincided with intensifying scrutiny from UK and EU authorities, when strengthening in-house accountability infrastructure might have been the more reassuring signal to regulators and users alike.

Bumble, the second-largest dating platform operator, has published limited transparency data. The company has not disclosed systematic information about romance fraud detection, sexual predation prevention or CSEA reporting at scale. Neither company operates with the oversight that other regulated sectors — financial services, telecommunications, healthcare — take for granted.

A 2023 survey conducted by UK Finance found that almost half of consumers who reported safety concerns on dating apps were dissatisfied with the platform's response, and many received no substantive response whatsoever. This dissatisfaction reflects rational assessment. When victims report romance fraud, platforms typically respond by disabling the scammer's account — an action that takes minutes but is frequently delayed for hours or days. Meanwhile, the scammer has already disappeared, using the stolen identity to establish a new account and target a new victim.

This cycle repeats indefinitely because the platforms' incentive structure is fundamentally misaligned with victim protection. The platforms measure success through user acquisition, engagement metrics and revenue growth. Safety investments are cost centres, not revenue generators. Public disclosures suggest safety spending remains a small fraction of revenue, though precise figures are not published by any major platform.

Regulatory Landscape and Enforcement

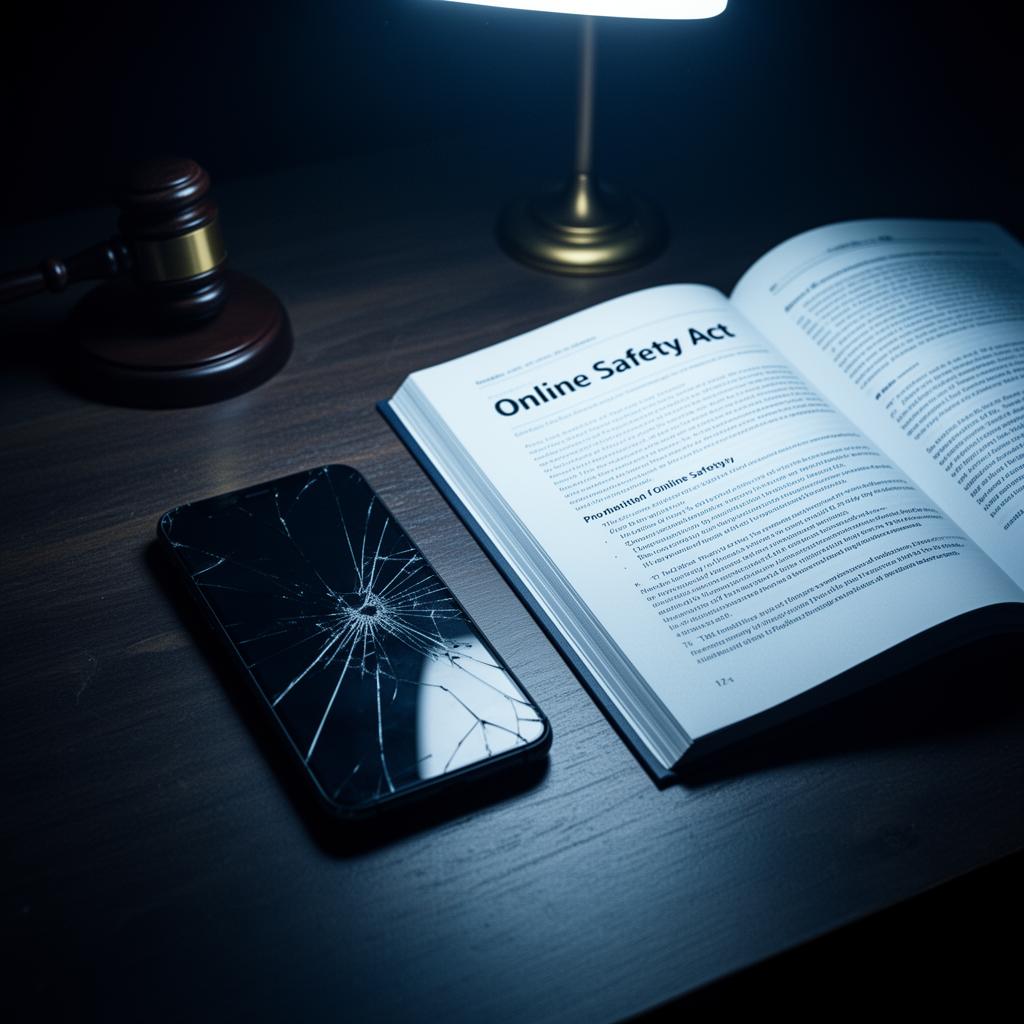

The UK regulatory landscape is at an inflection point. For more than a decade, dating platforms operated in an environment of minimal accountability, where "self-regulation" and "safety initiatives" substituted for legal obligation or enforcement consequence.

The Online Safety Act 2023 represents a fundamental shift. The Act, which received Royal Assent in October 2023 and whose enforcement began in phases throughout 2025, establishes clear statutory duties of care for online platforms hosting user-generated content and facilitating user interaction. Platforms must now:

- Identify and assess risks of illegal activity (including fraud and sexual exploitation) occurring through their services

- Implement proportionate measures to mitigate identified risks

- Respond expeditiously to user reports of illegal activity

- Publish transparency data on safety measures and incident response

Ofcom, the UK's communications regulator, was designated as the enforcement authority. Ofcom has begun building enforcement capacity, hiring specialist investigators and establishing investigation protocols. The first significant enforcement actions are expected in 2025 and 2026.

The enforcement mechanism has real teeth. The Online Safety Act permits fines of up to £18 million or 10% of global turnover, whichever is greater. For Match Group, 10% of annual turnover ($3.19 billion in 2024) comes to roughly $319 million. For Bumble, 10% of annual turnover ($1.07 billion in 2024) comes to roughly $107 million. These are sums that command executive attention.
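The fine ceiling is a "greater of" rule, which the Python sketch below makes explicit. The function names are illustrative, the revenue figures are those cited in this report, and no currency conversion is attempted: the turnover-based amounts are shown in US dollars as reported, while the fixed cap applies in pounds.

```python
FIXED_CAP_GBP = 18_000_000  # fixed ceiling cited above
TURNOVER_SHARE = 0.10       # share of global turnover cited above


def turnover_based_cap(turnover: float) -> float:
    """10% of turnover, in whatever currency the turnover is expressed."""
    return TURNOVER_SHARE * turnover


def maximum_fine_gbp(turnover_gbp: float) -> float:
    """The greater of the fixed cap and the turnover-based cap, both in GBP."""
    return max(FIXED_CAP_GBP, turnover_based_cap(turnover_gbp))


# Turnover-based caps for the revenue figures cited in this report (USD).
for name, revenue_usd in [("Match Group", 3.19e9), ("Bumble", 1.07e9)]:
    print(f"{name}: ${turnover_based_cap(revenue_usd) / 1e6:.0f} million")

# A smaller platform with £50 million turnover still faces the fixed cap.
print(f"£{maximum_fine_gbp(50_000_000) / 1e6:.0f} million")  # £18 million
```

The fixed cap only matters for platforms with turnover below £180 million; above that level the 10% rule sets the ceiling.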

The Act came late. Dating platforms have had more than a decade of regulatory forbearance — ample time to implement fraud detection systems, sexual predation prevention protocols and child safety mechanisms. Many platforms chose not to invest substantially in these areas. Regulatory enforcement, which should have been unnecessary, is now required to force accountability that the industry declined to implement voluntarily.

The European Union is following a similar trajectory. The EU's Digital Services Act also establishes platform duties and enforcement mechanisms, with potential fines of up to 6% of global annual turnover. Regulation is converging internationally, suggesting that platforms can no longer rely on geographic arbitrage to evade accountability.

The Acceleration Continues: 2025 and Beyond

The evidence compiled through this investigation paints a clear trajectory. Romance fraud is accelerating in scale and sophistication. AI technology is enabling industrial-scale deception. Sexual predation and child exploitation are systematic rather than incidental. Platforms are aware but largely unresponsive. Regulatory enforcement is beginning, but has arrived late.

Looking forward to 2026 and beyond, several dynamics are likely to intensify:

AI capability acceleration: Deepfake technology and large language models will continue to improve. The cost of generating synthetic media will decline. The barriers to entry for individual scammers will fall further. Within 12–18 months, deepfake-generated profile images may become indistinguishable from authentic photographs even under forensic analysis, and the capability is likely to be widely available.

Organised crime expansion: Southeast Asian organised crime networks will expand their romance fraud operations, recruiting more coerced participants and targeting additional markets beyond the US and UK. The profitability of romance fraud — characterised by lower enforcement risk than drug trafficking or human trafficking — will continue to attract organised crime investment.

Regulatory enforcement: Ofcom's first significant enforcement actions against dating platforms are imminent. These are likely to focus on platforms' failure to implement adequate fraud detection and sexual predation prevention systems. The enforcement actions will establish precedent and financial consequences that force other platforms to increase safety investment.

Consumer exodus: As regulatory action intensifies and platform reputations suffer, a subset of users will migrate away from established platforms toward smaller, privacy-focused alternatives or away from digital dating entirely. This will most significantly affect older demographics, where fraud victimisation is highest.

Litigation expansion: Romance fraud victims and families of sexual assault victims will increasingly pursue litigation against platforms, alleging negligent design, inadequate moderation and failure to implement reasonable safety measures. This litigation risk will become material to platform valuations and insurance costs.

What Needs to Change

The evidence compiled in this investigation identifies five structural weaknesses in the current framework. Each requires targeted reform — but each also presents implementation challenges that honest analysis must acknowledge.

Mandatory Transparency Reporting

This investigation has documented that platforms possess detailed data on fraud, predation and exploitation through systems such as Match Group's Sentinel — yet none publishes comprehensive transparency reports. The financial services sector offers a direct precedent: UK-regulated banks are required to publish fraud loss data, complaint volumes and remediation outcomes under FCA supervision. Applying an analogous requirement to dating platforms would mean standardised, audited disclosure of detected fraud incidents, actions taken, and victim outcomes. The challenge is definitional: unlike financial transactions, "incidents" on dating platforms span a spectrum from automated bot encounters to sustained fraud campaigns, and platforms will reasonably argue that standardisation is difficult. Regulators should acknowledge this complexity while insisting that difficulty does not justify opacity.

Independent Safety Audits

The investigation reveals that platforms self-assess their safety measures without independent scrutiny — a model that regulated industries abandoned decades ago. Annual independent safety audits, analogous to the financial audits required of publicly listed companies, would provide external verification of platform claims about safety investment and efficacy. The precedent exists: the Advertising Standards Authority already conducts independent compliance monitoring of online advertising. Extending this model to platform safety would require building specialist audit capacity, which does not currently exist at scale. This is a genuine constraint, but one that regulatory investment and industry co-funding could address within 18–24 months.

Victim Compensation Frameworks

This investigation documents that platforms profit from user engagement while externalising the costs of fraud to victims, banks and taxpayers. The Online Safety Act establishes platform duties of care but does not create a direct compensation mechanism for victims of platform-facilitated fraud. The financial services model again provides precedent: the Financial Ombudsman Service adjudicates consumer complaints against banks, with binding compensation awards. A comparable mechanism for dating platform users would create financial incentive alignment — platforms that invest in safety would face lower compensation liability. The counterargument is that platforms cannot reasonably guarantee that no fraud will occur on their services, and strict liability could be disproportionate. A negligence-based standard — requiring platforms to demonstrate that they implemented reasonable preventive measures — would balance accountability with practical reality.

AI-Powered Detection at Scale

The AI deepfake acceleration documented in this report demands a proportionate detection response. Platforms generate billions in annual revenue from the infrastructure that scammers exploit; investing a meaningful fraction in AI-powered fraud detection is both financially feasible and operationally necessary. Ofcom's enforcement framework should include assessment of whether platform detection capability is proportionate to platform revenue and user base. The difficulty is that detection technology is in an arms race with generation technology — today's detection tools may be obsolete within months. This argues for ongoing investment requirements rather than one-off compliance milestones.

Cross-Platform Data Sharing

This investigation documents that scammers and predators routinely migrate between platforms after detection, exploiting the absence of cross-platform intelligence sharing. Match Group's Sentinel database operates only within the Match ecosystem; a scammer banned from Tinder can immediately create an account on Bumble. Mandatory cross-platform data sharing for identified fraud and predation accounts — analogous to the financial sector's CIFAS fraud prevention database — would close this gap. Privacy concerns are legitimate: sharing user data between competing companies requires robust data protection safeguards, purpose limitation and independent governance. The CIFAS model demonstrates that these challenges are solvable, but implementing a dating-sector equivalent would require regulatory mandate, industry cooperation and sustained oversight.

Methodology and Sources

This investigation draws on data from multiple independent research organisations, financial institutions, academic researchers and regulatory bodies:

Official fraud statistics and reporting:

- National Fraud Intelligence Bureau (NFIB) crime data (9,449 reported romance fraud cases, £106 million in losses, 2024/25)

- Financial Conduct Authority fraud and scam research and reporting rate estimates

- Action Fraud incident data

Financial services and consumer fraud research:

- Barclays 20% surge in romance scam reports early 2025

- TSB victim payment analysis (11 payments over 95 days)

- UK Finance loss data (£20.5 million across ~3,000 cases H1 2025); consumer satisfaction surveys

- GBG analysis of dating app user-to-account authenticity rates

Cybersecurity and AI research:

- McAfee 2026 research on deepfakes and AI-enabled romance fraud

- Norton consumer survey (14,000 adults across 14 countries; 34% targeting rate among online daters; 64% victimisation rate)

- RedCompass Labs organised fraud network investigation

Academic research on predatory behaviour and exploitation:

- Brigham Young University sexual assault research (2,000 cases; 14% first in-person meeting via dating app)

- University of Edinburgh child sex offender digital behaviour research (offenders nearly 4× more likely to use dating platforms)

- Childlight child safeguarding research (two-thirds of investigated offenders used dating platforms; one-fifth used daily)

Crime statistics and law enforcement data:

- Police crime statistics (175% rise in dating app-linked predatory offences 2017–2021, 699 to 1,922)

- National Crime Agency data (600% increase in serious sexual assaults via online dating 2009–2014)

Company and regulatory sources:

- Match Group investor relations filings (2024 revenue $3.19B; Sentinel database; trust-and-safety outsourcing decision 2024)

- Bumble investor data (2024 revenue $1.07B)

- Ofcom regulatory guidance and enforcement framework

- UK legislation: Online Safety Act 2023

All figures, claims and statistics cited in this investigation are sourced from publicly available reports, regulatory documentation or academic publications. Where claims appear in company statements or investor materials, they have been independently verified against third-party research where possible. Discrepancies between company claims and independent research have been noted and attributed to their respective sources.


DII Editorial Team


DatingIndustryInsights.com is an independent B2B intelligence platform covering the global online dating industry. It publishes original research, financial analysis, regulatory tracking, and investigative reporting. It operates with no advertising from the companies it covers.
